MPS facial recognition system inaccurate in four out of five cases

The Metropolitan Police Service (MPS) has attacked the findings of a report it commissioned, after the independent researchers who wrote it urged the force to stop testing facial recognition technology.

Academics from the University of Essex found that four out of five people identified as possible suspects were innocent.

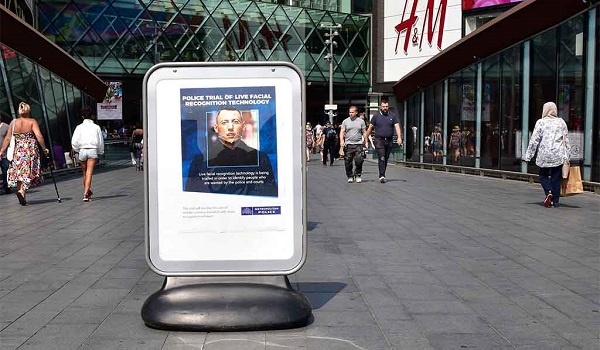

The MPS granted them access to six of its live facial recognition (LFR) trials, held in Soho, Romford and at the Westfield shopping centre in Stratford, east London.

They found that 81 per cent of the system's matches were wrong, meaning it regularly misidentified people, who were then wrongly stopped. Across the six trials evaluated, only eight of the technology's 42 matches were correct.

However, MPS Deputy Assistant Commissioner Duncan Ball said the force was “extremely disappointed with the negative and unbalanced tone” of the report, insisting the pilot had been successful.

Mr Ball added: “This is new technology, and we’re testing it within a policing context. The Met’s approach has developed throughout the pilot period, and the deployments have been successful in identifying wanted offenders. We believe the public would absolutely expect us to try innovative methods of crime fighting in order to make London safer.”

The MPS prefers to measure accuracy by comparing successful and unsuccessful matches with the total number of faces processed by the facial recognition system. According to this metric, the error rate of the six trials was just 0.1 per cent.
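The gap between the two figures comes down to what each one divides by. The Python sketch below is illustrative only: the function names are hypothetical, the first measure uses only the counts reported above (eight correct matches out of 42), and the second is a simplified reading of the MPS approach in which wrong matches are divided by every face processed. The number of faces processed is not given here, so `faces_scanned` is a hypothetical input.

```python
def share_of_matches_wrong(correct_matches: int, total_matches: int) -> float:
    """Essex-style measure: the fraction of the system's matches that were wrong."""
    if total_matches <= 0:
        raise ValueError("total_matches must be positive")
    return (total_matches - correct_matches) / total_matches


def share_of_faces_misflagged(false_matches: int, faces_scanned: int) -> float:
    """Simplified MPS-style measure (an assumption): wrong matches divided by
    every face the system processed. faces_scanned is a hypothetical input;
    the figure is not reported here."""
    if faces_scanned <= 0:
        raise ValueError("faces_scanned must be positive")
    return false_matches / faces_scanned


# Reported figures for the six trials: 8 correct out of 42 matches (34 wrong).
essex = share_of_matches_wrong(8, 42)
print(f"Matches that were wrong: {essex:.0%}")  # prints "Matches that were wrong: 81%"
```

Under these assumptions, the second measure counts every passer-by, most of whom were never flagged. The same 34 wrong matches therefore shrink to a very small percentage. The two figures answer different questions: how often an alert is wrong, versus how often any scanned face produces a wrong alert.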

The report, commissioned by the MPS and released to The Guardian and Sky News, also warned of “surveillance creep”, with the technology being used to find people who were not wanted by the courts. Its authors added that the use of LFR was unlikely to be justifiable under human rights law, which protects privacy, freedom of expression and the right to protest.

The research also raised concerns about the criteria for the watch list: its information was often out of date, so officers stopped people whose cases had already been concluded.

The report’s authors, Professor Peter Fussey and Dr Daragh Murray, called for live trials of LFR to be halted until these concerns are addressed.

Dr Murray said: “This report raises significant concerns regarding the human rights law compliance of the trials. The legal basis for the trials was unclear and is unlikely to satisfy the ‘in accordance with the law’ test established by human rights law.

“It does not appear that an effective effort was made to identify human rights harms or to establish the necessity of LFR. Ultimately, the impression is that human rights compliance was not built into the Metropolitan Police’s systems from the outset, and was not an integral part of the process.”

The University of Essex researchers also raised concerns about potential bias, citing 2018 US research into facial recognition software from IBM, Microsoft and the China-based company Face++. That research found the programs were most likely to misidentify dark-skinned women and most likely to correctly identify light-skinned men.

Police use of facial recognition technology is currently under judicial review in Wales, following the technology’s first ever legal challenge, brought against South Wales Police by Liberty.

Hannah Couchman, policy and campaigns officer for the civil rights group, said: “This damning assessment of the Met’s trial of facial recognition technology only strengthens Liberty’s call for an immediate end to all police use of this deeply invasive tech in public spaces.”

During the hearing at Cardiff Civil Justice and Family Centre, South Wales Police argued that the cameras prevented crime, protected the public and did not breach the privacy of innocent people whose images were captured.

Speaking at the end of the hearing, Deputy Chief Constable Richard Lewis said: “This process has allowed the court to scrutinise decisions made by South Wales Police in relation to facial recognition technology. We welcomed the judicial review and now await the court’s ruling on the lawfulness and proportionality of our decision making and approach during the trial of the technology.

“The force has always been very cognisant of concerns surrounding privacy and understands that we, as the police, must be accountable and subject to the highest levels of scrutiny to ensure that we work within the law.”

The case has been adjourned, and two judges will rule at a later date that has yet to be fixed.

Last week, body-worn video camera manufacturer Axon announced it would not add facial recognition technology to its products in the foreseeable future, after a year-long investigation by its own ethics board concluded the technology was “not currently reliable enough to ethically justify its use”.